Do your industrial data streams deliver reliably, completely, and at the right quality? In practice, the Unified Namespace (UNS) provides the infrastructure for a centralized data flow – but without clear operational discipline and governance, the UNS remains an uncontrolled data channel. This is exactly where Industrial DataOps comes in. This article explains what Industrial DataOps means, what role it plays in the UNS, and which concrete steps will get you started in practice.

What is Industrial DataOps?

Industrial DataOps is a collaborative discipline for the continuous management of industrial data pipelines – from data capture at the machine to consumption in analytics, MES (Manufacturing Execution System), or ERP (Enterprise Resource Planning) systems. The term applies DevOps principles – automation, quality assurance, and shared responsibility – to the world of shop floor and industrial data.

| DataOps Principle | Meaning in an Industrial Context |
|---|---|
| Automation | Operating data pipelines without manual intervention |
| Quality Assurance | Validating and sanity-checking every data point at ingestion |
| Observability | Full visibility into data status, latency, and pipeline failures |
| Collaboration | OT and IT teams share ownership of data flows |
| Iteration | Continuously improving pipelines rather than treating them as one-time deployments |

Important: Industrial DataOps is not a software component you install. It is an operational discipline that defines how teams handle industrial data – both technically and organizationally.

The Role of Industrial DataOps in the Unified Namespace

The UNS provides the infrastructure for a centralized, hierarchical data stream. All systems – from the PLC (Programmable Logic Controller) to the ERP – publish and consume data through a shared message broker, for example one based on NATS or MQTT. This eliminates point-to-point connections between individual systems and makes the UNS the Single Source of Truth (SSOT) for all data.

But the UNS alone does not answer the critical question: is this data correct, complete, and consistent? A sensor can deliver faulty readings. PLCs can drop connections. A payload schema can change without consumers being notified. Industrial DataOps fills exactly this gap: it defines how data in the UNS is maintained, validated, and reliably delivered to every consumer.

The relationship can be stated precisely:

- Unified Namespace: infrastructure – the data channel

- Industrial DataOps: operational discipline – the quality and governance layer for that channel

Both layers are complementary. A UNS without DataOps practices is technically functional, but operationally fragile and unable to scale reliably across sites or systems.

Core Responsibilities at a Glance

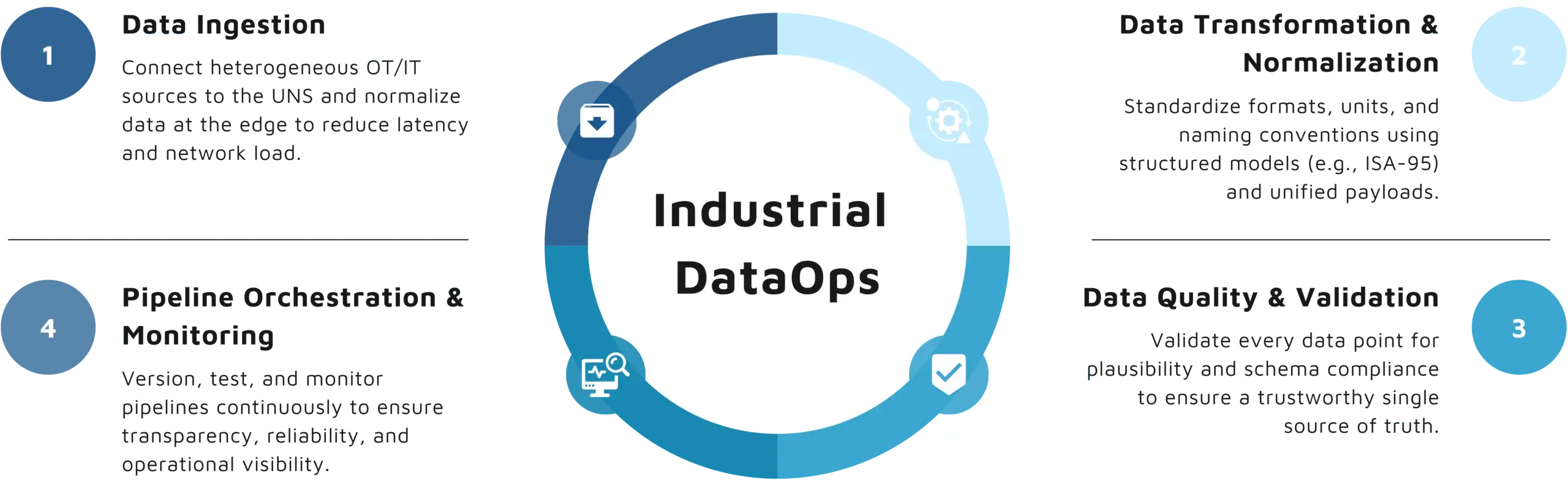

Industrial DataOps in the UNS spans four key areas that together establish a trustworthy data foundation:

1. Data Ingestion

Industrial data originates from heterogeneous sources: PLCs, SCADA systems (Supervisory Control and Data Acquisition), sensors, and higher-level systems such as MES or SAP. The ingestion layer connects these sources to the UNS and normalizes raw data at the point of entry. Proximity to the source is critical: edge processing directly at the machine reduces unnecessary network traffic, lowers latency, and increases the overall responsiveness of the system.
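One common way edge processing cuts network traffic is report-by-exception: a reading is forwarded only when it has changed by more than a deadband. The sketch below is a minimal illustration of that idea, not a description of any specific product. The class name `DeadbandFilter` and the threshold of 0.5 are assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DeadbandFilter:
    """Report-by-exception: forward a value only if it moved enough."""

    threshold: float                   # assumed minimum change worth publishing
    last_sent: Optional[float] = None  # last value that was actually forwarded

    def should_publish(self, value: float) -> bool:
        # Always forward the first reading so consumers get an initial state.
        if self.last_sent is None or abs(value - self.last_sent) >= self.threshold:
            self.last_sent = value
            return True
        return False


# Six raw temperature readings arrive at the edge ...
readings = [71.0, 71.1, 71.2, 72.0, 72.1, 70.5]
temp_filter = DeadbandFilter(threshold=0.5)

# ... but only the significant changes are sent on to the UNS.
published = [r for r in readings if temp_filter.should_publish(r)]
print(published)  # [71.0, 72.0, 70.5]
```

The right threshold depends on the signal and on what consumers need. A deadband that is too wide hides real process changes, so it should be set per data point rather than globally.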

2. Data Transformation and Normalization

Raw data from different OT sources is rarely directly compatible. IT/OT architects must harmonize formats, engineering units, and naming conventions before consumers can work with the data reliably. In the UNS, this is accomplished through standardized data models – typically based on the ISA-95 hierarchy (Enterprise / Site / Area / Line / Cell) and unified payload formats such as JSON.
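As a rough sketch of what this harmonization can look like, the following example converts a source-specific reading into a hierarchical topic and a unified JSON payload. The raw field names (`val`, `unit`, `ts`), the unit labels, and the payload layout are assumptions made for illustration. A real data model would be agreed between OT and IT teams.

```python
import json
from datetime import datetime, timezone
from typing import Tuple


def build_topic(site: str, area: str, line: str, cell: str, measurement: str) -> str:
    """Join the hierarchy levels into a lower-case, slash-separated topic."""
    parts = (site, area, line, cell, measurement)
    return "/".join(part.strip().lower() for part in parts)


def fahrenheit_to_celsius(value_f: float) -> float:
    return (value_f - 32.0) * 5.0 / 9.0


def normalize(raw: dict) -> Tuple[str, str]:
    """Turn a source-specific temperature reading into (topic, JSON payload)."""
    value = raw["val"]
    if raw["unit"] == "degF":
        # Harmonize engineering units: consumers always receive degrees Celsius.
        value = fahrenheit_to_celsius(value)
    topic = build_topic(raw["site"], raw["area"], raw["line"], raw["cell"], "temperature")
    payload = {
        "value": round(value, 2),
        "unit": "degC",
        # Epoch seconds from the source become an ISO 8601 timestamp in UTC.
        "timestamp": datetime.fromtimestamp(raw["ts"], tz=timezone.utc).isoformat(),
    }
    return topic, json.dumps(payload, sort_keys=True)


# Hypothetical raw reading as one particular source might deliver it.
raw_reading = {
    "site": "Plant01", "area": "Assembly", "line": "Line01", "cell": "Process01",
    "val": 212.0, "unit": "degF", "ts": 0,
}
topic, payload = normalize(raw_reading)
print(topic)  # plant01/assembly/line01/process01/temperature
print(payload)
```

Doing the unit conversion and naming once, at the point of entry, means that every consumer sees the same representation and none of them has to know the quirks of the original source.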

3. Data Quality and Validation

Every data point should be actively validated at ingestion: is the value within a plausible range? Does it match the expected schema? Is it a genuine measurement or a statistical outlier? Faulty records are isolated and flagged – not silently discarded or passed downstream. Only then can consumers reliably treat the UNS as the authoritative SSOT for operational and business decisions.
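The following sketch shows one possible shape for such a check: a schema test, a range test, and a routing decision that sends faulty records to a quarantine destination with the reasons attached. The rule values, the required fields, and the destination names `uns` and `quarantine` are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Tuple


@dataclass(frozen=True)
class Rule:
    min_value: float
    max_value: float
    unit: str


# Hypothetical rule set: one plausibility rule per measurement type.
RULES = {"temperature": Rule(min_value=-40.0, max_value=150.0, unit="degC")}

# Hypothetical payload schema: field name -> accepted Python type(s).
REQUIRED_FIELDS = {"value": (int, float), "unit": str, "timestamp": str}


def validate(measurement: str, payload: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the record is valid."""
    errors = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in payload:
            errors.append(f"missing field: {field}")
        elif isinstance(payload[field], bool) or not isinstance(payload[field], expected):
            # bool is excluded explicitly because it is a subclass of int.
            errors.append(f"wrong type for field: {field}")
    if errors:
        return errors  # range checks are meaningless on a broken schema

    rule = RULES.get(measurement)
    if rule is None:
        return [f"no rule defined for: {measurement}"]
    if payload["unit"] != rule.unit:
        errors.append(f"unexpected unit: {payload['unit']}")
    if not rule.min_value <= payload["value"] <= rule.max_value:
        errors.append(f"value out of range: {payload['value']}")
    return errors


def route(measurement: str, payload: Dict[str, Any]) -> Tuple[str, Dict[str, Any]]:
    """Flag and isolate faulty records instead of dropping them silently."""
    errors = validate(measurement, payload)
    if errors:
        return "quarantine", {**payload, "errors": errors}
    return "uns", payload


good = {"value": 72.5, "unit": "degC", "timestamp": "2024-01-01T00:00:00+00:00"}
bad = {"value": 900.0, "unit": "degC", "timestamp": "2024-01-01T00:00:00+00:00"}
print(route("temperature", good)[0])  # uns
print(route("temperature", bad))
```

Keeping the rejected record together with its error list preserves the evidence, so OT and IT teams can later decide whether the sensor, the rule, or the schema needs fixing.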

4. Pipeline Orchestration and Monitoring

Data pipelines should be treated like software artifacts: versioned, tested, and continuously monitored. Observability – the ability to assess the state of a pipeline from the outside without deep inspection – is the central instrument here. When did specific data last arrive? Which topics have been silent for hours? What is missing downstream? Without monitoring, even a well-architected UNS becomes an operational black box.
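The question of which topics have gone silent can be answered with very little logic. The sketch below compares the last-seen time of each expected topic against a per-topic silence limit. The function name, the topic list, and the limits in seconds are assumptions for illustration. In practice, the last-seen times would be updated by a subscriber on the broker.

```python
from typing import Dict, List


def find_silent_topics(
    last_seen: Dict[str, float],
    max_silence: Dict[str, float],
    now: float,
) -> List[str]:
    """Return expected topics that are overdue or have never been seen."""
    silent = []
    for topic, limit in max_silence.items():
        seen = last_seen.get(topic)
        if seen is None or now - seen > limit:
            silent.append(topic)
    return sorted(silent)


BASE = "plant01/assembly/line01/process01"

# Expected topics and the longest acceptable gap between messages (seconds).
max_silence = {
    f"{BASE}/temperature": 10.0,
    f"{BASE}/pressure": 60.0,
    f"{BASE}/vibration": 60.0,
}

# Timestamps of the most recent message per topic (seconds, same clock as `now`).
last_seen = {
    f"{BASE}/temperature": 995.0,
    f"{BASE}/pressure": 400.0,
}

silent = find_silent_topics(last_seen, max_silence, now=1000.0)
print(silent)
```

In this example, the pressure topic is overdue and the vibration topic has never reported, so both would be surfaced. Driving the check from a list of expected topics, rather than from whatever happens to arrive, is what makes missing data visible.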

Getting Started: Five Steps to Industrial DataOps in Practice

The practical introduction to Industrial DataOps works best iteratively. These five steps establish a solid operational foundation:

- Map data sources and consumers: Which systems publish data into the UNS? Which systems consume it? And which data points are business-critical? This map forms the baseline for all architectural and governance decisions that follow.

- Define topic structure and data model: Establish a consistent topic hierarchy based on ISA-95. Example: plant01/assembly/line01/process01/temperature. A well-defined, consistent model is the prerequisite for reliable, maintainable pipelines at scale.

- Define validation rules per data type: For each relevant data point, specify what constitutes a valid value range and what schema is expected. These rules are enforced directly at ingestion – before faulty data enters the UNS and propagates to downstream consumers.

- Set up monitoring and alerting: Missing or malformed messages must be surfaced immediately. Even a simple dashboard showing which topics are active and which have gone silent significantly improves operational reliability and mean time to detect (MTTD).

- Expand iteratively: Industrial DataOps is not a one-time deployment. Pipelines evolve continuously: new sources are connected, validation rules are refined, and monitoring coverage is extended step by step.
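A topic convention from the second step only helps if it is enforced. As a minimal sketch, the check below accepts only topics with exactly five lower-case levels, matching the shape of the example topic in the list above. The allowed character set and the fixed depth of five are assumptions, and a real convention may differ.

```python
import re

# Assumed convention: each level starts with a lower-case letter and may
# contain lower-case letters, digits, underscores, and hyphens.
SEGMENT = r"[a-z][a-z0-9_-]*"

# Exactly five levels: site / area / line / cell / measurement.
TOPIC_PATTERN = re.compile(rf"{SEGMENT}(/{SEGMENT}){{4}}")


def is_valid_topic(topic: str) -> bool:
    """Check a publish topic against the assumed five-level convention."""
    return TOPIC_PATTERN.fullmatch(topic) is not None


candidates = [
    "plant01/assembly/line01/process01/temperature",  # conforms
    "Plant01/Assembly/line01/process01/temperature",  # upper case
    "plant01/assembly/line01/temperature",            # only four levels
    "plant01/assembly/line01/process01/#",            # wildcard, not a publish topic
]
for candidate in candidates:
    print(candidate, is_valid_topic(candidate))
```

A check like this can run in a pipeline test or at ingestion, so that naming drift is caught before new topics spread to consumers.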

i-flow as an Industrial DataOps Platform in the UNS

i-flow provides an end-to-end platform for Industrial DataOps in the UNS. The architecture follows a clear principle: configure centrally in the i-flow Hub – execute locally via i-flow Edges, close to the source.

- The i-flow Hub serves as the central management layer. Data models, transformation rules, validation logic, and connectivity configurations are all defined here. Changes are rolled out consistently to all Edge instances – with no manual intervention required on-site.

- The i-flow Edge executes these configurations directly at the source. It connects to PLCs, SCADA systems, and sensors via native industrial protocols such as OPC UA, Modbus, or Siemens S7, transforms data locally, and publishes it in normalized form to the UNS. This keeps processing close to where data originates, which is essential for offline scenarios and for environments that require minimal latency and no unnecessary traffic toward the cloud or data center.

- The i-flow Broker provides the central message broker for the UNS – based on NATS and MQTT – and ensures reliable, ordered delivery of all data points between producers and consumers. The interplay of Hub, Edge, and Broker forms the technical foundation for a production-ready Industrial DataOps operation.

Conclusion

Industrial DataOps is what lets the Unified Namespace scale reliably across sites and systems. The UNS provides the infrastructure for a centralized data stream – DataOps practice defines how that stream is operated reliably, with validated data quality, and on a continuous basis. Without this operational discipline, even a well-designed UNS remains an ungoverned data channel that consumers cannot fully trust for critical decisions. Getting started works iteratively: map your sources, define the data model, enforce validation at ingestion, build monitoring, and expand step by step. With i-flow, all of these steps can be implemented end-to-end – from central configuration in the Hub to decentralized execution directly at the data source.