In today’s connected factories, efficient data integration is key to optimizing production. The Unified Namespace (UNS) provides a scalable foundation by enabling communication across devices and systems from different vendors. While the UNS is widely recognized as a robust data infrastructure, developing advanced Industry 4.0 applications remains complex: diverse requirements, ranging from real-time streaming and batch processing to big data analytics and AI-driven predictions, must all be addressed. This whitepaper shows how a Distributed Unified Namespace (UNS) Architecture (#SharedUNS) addresses these challenges.

The Industrial Unified Namespace (UNS)

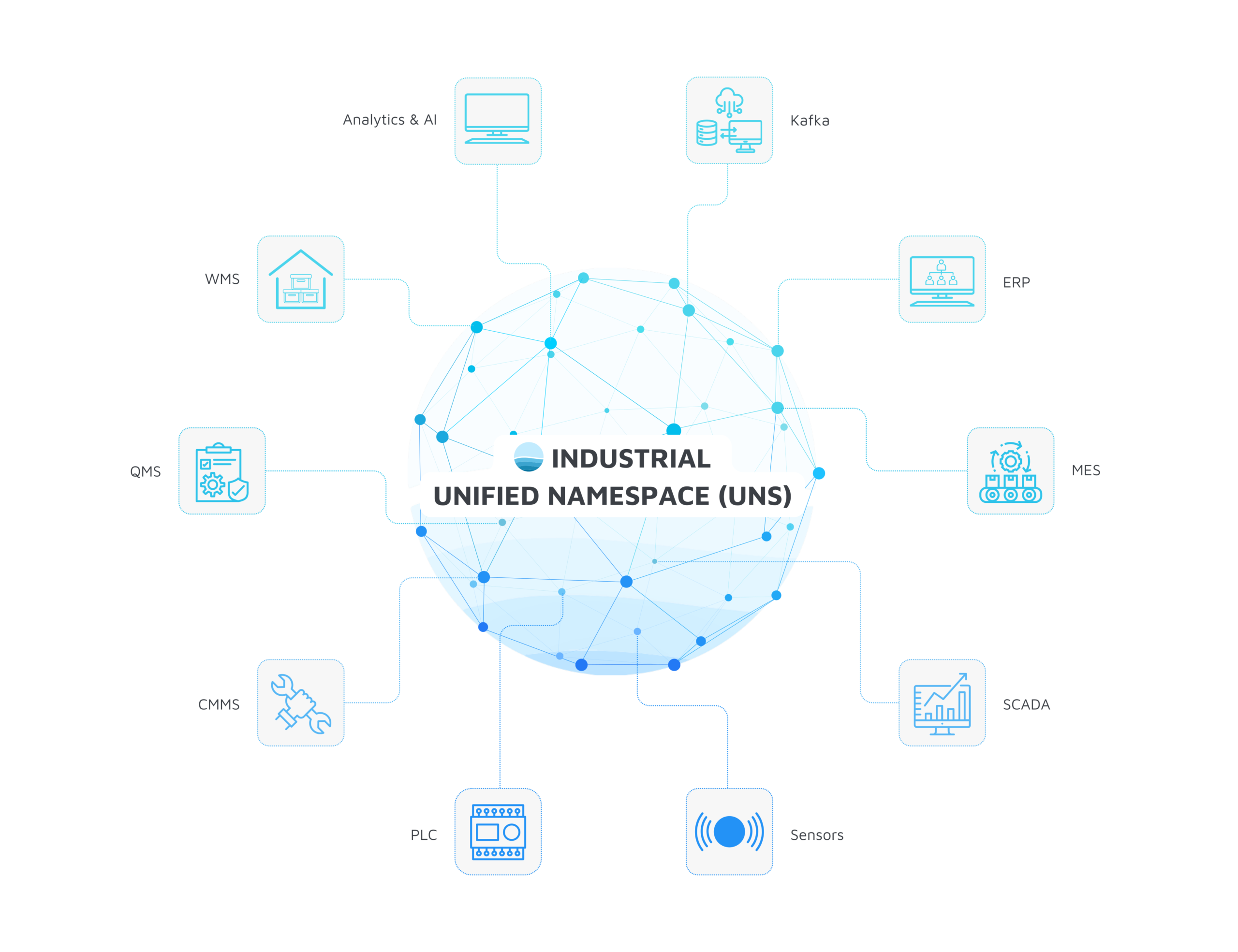

Modern industry generates vast amounts of data, from sensor readings and production metrics to enterprise-wide ERP information. Yet much of this data remains locked in silos, isolated across systems that communicate only in limited ways. The Industrial Unified Namespace (UNS) breaks down these barriers. It provides a standardized, real-time data interface, creating the foundation for truly connected, data-driven production.

What is the UNS?

An Industrial Unified Namespace (UNS) acts as the single source of truth for all data in an industrial environment. It structures information in a uniform, hierarchical namespace and makes it available in real time. Technically, this is typically implemented with a message broker (e.g. an MQTT broker) that serves as the central data hub. Data producers such as sensors or PLCs publish their information to the broker, while data consumers such as MES or analytics platforms subscribe to it. Communication is decoupled, event-driven, and based on the publish/subscribe pattern. This approach eliminates numerous point-to-point interfaces and replaces them with a central integration layer.
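To make the publish/subscribe pattern more concrete, here is a minimal in-memory sketch. It is not a real broker and not how any specific product implements the UNS; `InMemoryBroker`, `topic_matches` and the topic names are hypothetical. The filter logic follows the MQTT topic-filter rules, where `+` matches exactly one level and a trailing `#` matches the remaining levels, so producers and consumers only share topic names and never reference each other.

```python
from typing import Callable, List, Tuple

Handler = Callable[[str, dict], None]


def topic_matches(pattern: str, topic: str) -> bool:
    """Check an MQTT-style topic filter against a concrete topic.

    '+' matches exactly one level; '#' (only valid as the last level)
    matches the parent level and any number of levels below it.
    """
    p_levels = pattern.split("/")
    t_levels = topic.split("/")
    for i, p in enumerate(p_levels):
        if p == "#":
            return True
        if i >= len(t_levels):
            return False
        if p != "+" and p != t_levels[i]:
            return False
    return len(p_levels) == len(t_levels)


class InMemoryBroker:
    """Toy broker: producers and consumers only share topic names."""

    def __init__(self) -> None:
        self._subscriptions: List[Tuple[str, Handler]] = []

    def subscribe(self, pattern: str, handler: Handler) -> None:
        self._subscriptions.append((pattern, handler))

    def publish(self, topic: str, payload: dict) -> None:
        # Deliver to every subscriber whose filter matches the topic
        for pattern, handler in self._subscriptions:
            if topic_matches(pattern, topic):
                handler(topic, payload)


# Usage: a consumer subscribes to the temperature of press01 on any line of plant1
received = []
broker = InMemoryBroker()
broker.subscribe("acme/plant1/+/press01/temperature",
                 lambda topic, payload: received.append((topic, payload["value"])))
broker.publish("acme/plant1/line2/press01/temperature", {"value": 71.5})  # matches
broker.publish("acme/plant1/line2/press01/pressure", {"value": 3.2})      # ignored
print(received)  # [('acme/plant1/line2/press01/temperature', 71.5)]
```

In a real deployment the broker would be an MQTT broker reached through a client library; the point of the sketch is only that adding a consumer means adding a subscription, not building another point-to-point interface.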

Key Aspects and Advantages of the UNS

The UNS offers numerous advantages, including seamless data integration, interoperability, scalability and an open architecture. These advantages help to increase the efficiency of data management and optimize business processes.

- Single source of truth: By bringing together real-time data and historical data in a single, central repository, the UNS enables standardized data exchange for all systems and users. This leads to improved collaboration and more efficient operational processes, as information is available quickly and accurately.

- IIoT integration: Through the direct integration of Industrial IoT (IIoT) devices, the UNS supports the collection and analysis of data in real time. This enables proactive maintenance, predictive analysis and process optimization.

- Interoperability with IT systems: In the UNS, systems from different manufacturers can communicate with each other via a standardized interface – regardless of whether they are IIoT devices, MES or ERP systems. This enables end-to-end automation, process optimization and data-based decision-making.

Challenges of a Centralized Architecture

Despite these advantages, introducing a UNS brings organizational and technical challenges. A central technical challenge is ensuring a high-performance, scalable and secure data infrastructure that meets the requirements of different use cases. Use cases often have individual requirements in terms of reliability, latency, data structure, data formats, etc. How can a single central namespace accommodate all of these individual requirements? In addition, the target users of the data differ, which makes standardization and data harmonization within the UNS more difficult.

Last but not least, the integration and combination of different data processing concepts such as streaming, batch and big data often present companies with challenges.

1. Streaming Data

- Data processing: Streaming data refers to continuous data streams that are captured and processed in real time. This type of data is particularly useful for applications that require immediate response, such as real-time monitoring, IIoT and process control. Streaming data is often captured and processed in small, incremental chunks, which allows for quick analysis and response.

- Storage: If storage is required, real-time data is stored in low-latency time-series databases such as TimescaleDB or InfluxDB, which are optimized for time-stamped data streams. Short-term storage in a cache can also be useful to ensure fast access. If necessary, the same real-time data can flow into a data warehouse in parallel.

- Example: One example of the use of streaming data is the monitoring of production lines in a factory. Sensors on the machines continuously record data such as temperature, pressure and speed. This data is sent in real time to a central system that detects anomalies and takes immediate action to prevent breakdowns and increase efficiency.

- Challenge in the UNS: The biggest challenge is combining real-time data with batch or historical data. The UNS must be able to link continuously incoming data with existing information without adding latency or introducing data inconsistencies. Event-driven architectures and delivery semantics such as exactly-once or at-least-once processing must also be taken into account.
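The practical difference between at-least-once and exactly-once processing can be sketched with an idempotent consumer. Under at-least-once delivery, a message may arrive twice (for example, after a lost acknowledgement); deduplicating by a producer-assigned message ID ensures its effect is applied only once. The `Message` fields and the part counter are assumptions chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass(frozen=True)
class Message:
    msg_id: str     # unique ID assigned by the producer (assumption)
    counter: str    # e.g. a hypothetical "good_parts" counter
    increment: int


@dataclass
class IdempotentConsumer:
    """Turns at-least-once delivery into effectively-once processing."""
    seen: Set[str] = field(default_factory=set)
    totals: Dict[str, int] = field(default_factory=dict)

    def handle(self, msg: Message) -> bool:
        """Apply a message once; return False if it is a redelivered duplicate."""
        if msg.msg_id in self.seen:
            return False
        self.seen.add(msg.msg_id)
        self.totals[msg.counter] = self.totals.get(msg.counter, 0) + msg.increment
        return True


consumer = IdempotentConsumer()
deliveries = [
    Message("m1", "good_parts", 2),
    Message("m2", "good_parts", 3),
    Message("m2", "good_parts", 3),  # redelivered, e.g. after a lost acknowledgement
]
results = [consumer.handle(m) for m in deliveries]
print(results, consumer.totals)  # [True, True, False] {'good_parts': 5}
```

In a real system the set of processed IDs would have to be persisted atomically together with the computed state; otherwise a crash between the two steps reintroduces duplicates.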

2. Batch Data

- Data processing: Batch data is processed at regular intervals and follows fixed load cycles. Typically, processing is carried out using ETL processes (Extract, Transform, Load), which extract data from operational systems, transform it and transfer it to structured storage.

- Storage: Batch data is mostly stored in relational databases, which enable structured storage with predefined schemas. These databases organize data in tables, which makes it easy to access. Relational databases are ideal for applications that require complex queries and transactions, such as ERP and CRM systems or KPI reporting.

- Example: Batch or relational data enables companies to carry out comprehensive analyses and make well-founded decisions. For example, a company can analyze production times across different systems in order to identify trends and eliminate problems.

- Challenge in the UNS: The challenge lies in synchronization with real-time data, for example when polled data (batch data) has to be integrated into event-based data (streaming data). Batch data must be integrated into the UNS in such a way that it harmonizes with streaming data and leaves no information gaps between update cycles.
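One common way to fit polled values into an event-based UNS is report by exception: compare each poll with the previous one and publish only the changes. The sketch below assumes snapshots arrive as `(poll_timestamp, {tag: value})` pairs; the tags are hypothetical.

```python
from typing import Dict, Iterable, List, Tuple

Event = Tuple[float, str, object]  # (poll timestamp, tag, new value)


def snapshots_to_events(snapshots: Iterable[Tuple[float, Dict[str, object]]]) -> List[Event]:
    """Turn periodic polling snapshots into change events (report by exception).

    An event is emitted when a tag appears for the first time or when its value
    differs from the previous poll; unchanged values produce no event.
    """
    last: Dict[str, object] = {}
    events: List[Event] = []
    for ts, values in snapshots:
        for tag, value in sorted(values.items()):
            if tag not in last or last[tag] != value:
                events.append((ts, tag, value))
                last[tag] = value
    return events


polls = [
    (0.0, {"order_status": "running", "good_parts": 120}),
    (60.0, {"order_status": "running", "good_parts": 120}),   # no change, no event
    (120.0, {"order_status": "running", "good_parts": 135}),
    (180.0, {"order_status": "finished", "good_parts": 135}),
]
events = snapshots_to_events(polls)
for event in events:
    print(event)
```

Between two events, consumers keep the last published value, so the state they see has no gaps. The trade-off is time resolution: the real change may have happened anywhere within the polling interval before the reported timestamp.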

3. Big Data (e.g. Data Lakehouse Architecture)

- Data processing: Big data refers to unstructured or semi-structured (raw) data that originates from various sources and is characterized by high volume, high velocity and great variety. Processing and analyzing this data requires special technologies such as Apache Spark or Hadoop, which enable parallel data processing.

- Storage: This data is often stored in a data lakehouse. A data lakehouse combines the advantages of a data lake and a data warehouse by storing and processing both structured and unstructured data. It provides a unified platform for data management, allowing companies to use their data more efficiently.

- Example: By integrating real-time and historical data, companies can continuously monitor and optimize production processes. A data lakehouse can help identify bottlenecks, analyze the causes of quality problems and maximize resource utilization. The combination of real-time and historical data makes it possible to create accurate predictive models to better plan future production volumes and make supply chains more efficient.

- Challenge in the UNS: The challenge is to standardize heterogeneous data formats and enable queries across different systems. The aim here is not to integrate all big data information into the UNS, but to harmonize and make available relevant information such as sensor data.
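Harmonizing heterogeneous formats typically means mapping each source onto one agreed schema with common units and timestamps. Both source formats and the target schema below are invented for illustration. The sketch assumes source B always sends an explicit UTC offset, because Python's `astimezone()` would otherwise interpret a naive timestamp as the local time of the converting machine.

```python
from datetime import datetime, timezone

# Common target schema (assumption): asset, quantity, value, unit, timestamp (ISO 8601, UTC)


def from_source_a(rec: dict) -> dict:
    """Hypothetical source A: {'dev', 'tempF', 'ts' (epoch milliseconds)}."""
    return {
        "asset": rec["dev"],
        "quantity": "temperature",
        "value": round((rec["tempF"] - 32) * 5 / 9, 2),  # Fahrenheit -> Celsius
        "unit": "degC",
        "timestamp": datetime.fromtimestamp(rec["ts"] / 1000, tz=timezone.utc).isoformat(),
    }


def from_source_b(rec: dict) -> dict:
    """Hypothetical source B: {'machine', 'signal', 'val' (string), 'unit', 'time' (ISO 8601 with offset)}."""
    return {
        "asset": rec["machine"],
        "quantity": rec["signal"],
        "value": float(rec["val"]),
        "unit": rec["unit"],
        "timestamp": datetime.fromisoformat(rec["time"]).astimezone(timezone.utc).isoformat(),
    }


a = from_source_a({"dev": "oven1", "tempF": 212.0, "ts": 0})
b = from_source_b({"machine": "oven2", "signal": "temperature", "val": "98.5",
                   "unit": "degC", "time": "1970-01-01T01:00:00+01:00"})
print(a)
print(b)
```

Once both records share one shape, queries across systems can treat them identically, which is the harmonization step; which fields end up in the UNS remains a per-use-case decision.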

An effective architecture design must therefore combine scalable storage solutions, high-performance processing and intelligent integration mechanisms to bring together the various data streams and enable a holistic view of industrial processes.

Distributed Unified Namespace (UNS) Architecture

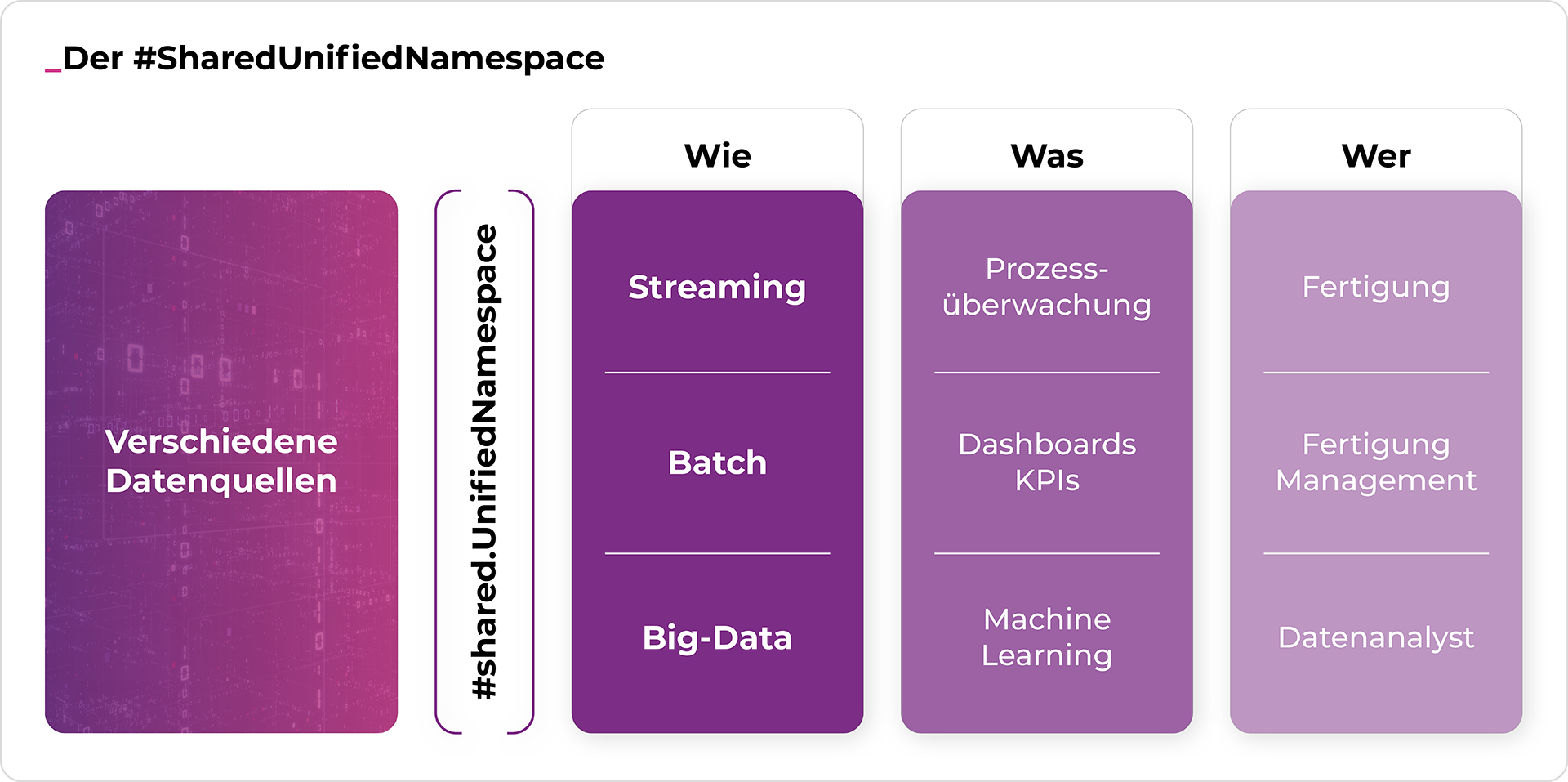

The Distributed Unified Namespace (UNS) Architecture (#SharedUNS) directly addresses these challenges by emphasizing decentralization and differentiated data flows. Unlike the traditional UNS with a central message broker, this approach relies on a decentralized, application-oriented design.

What is the Distributed Unified Namespace (UNS) Architecture?

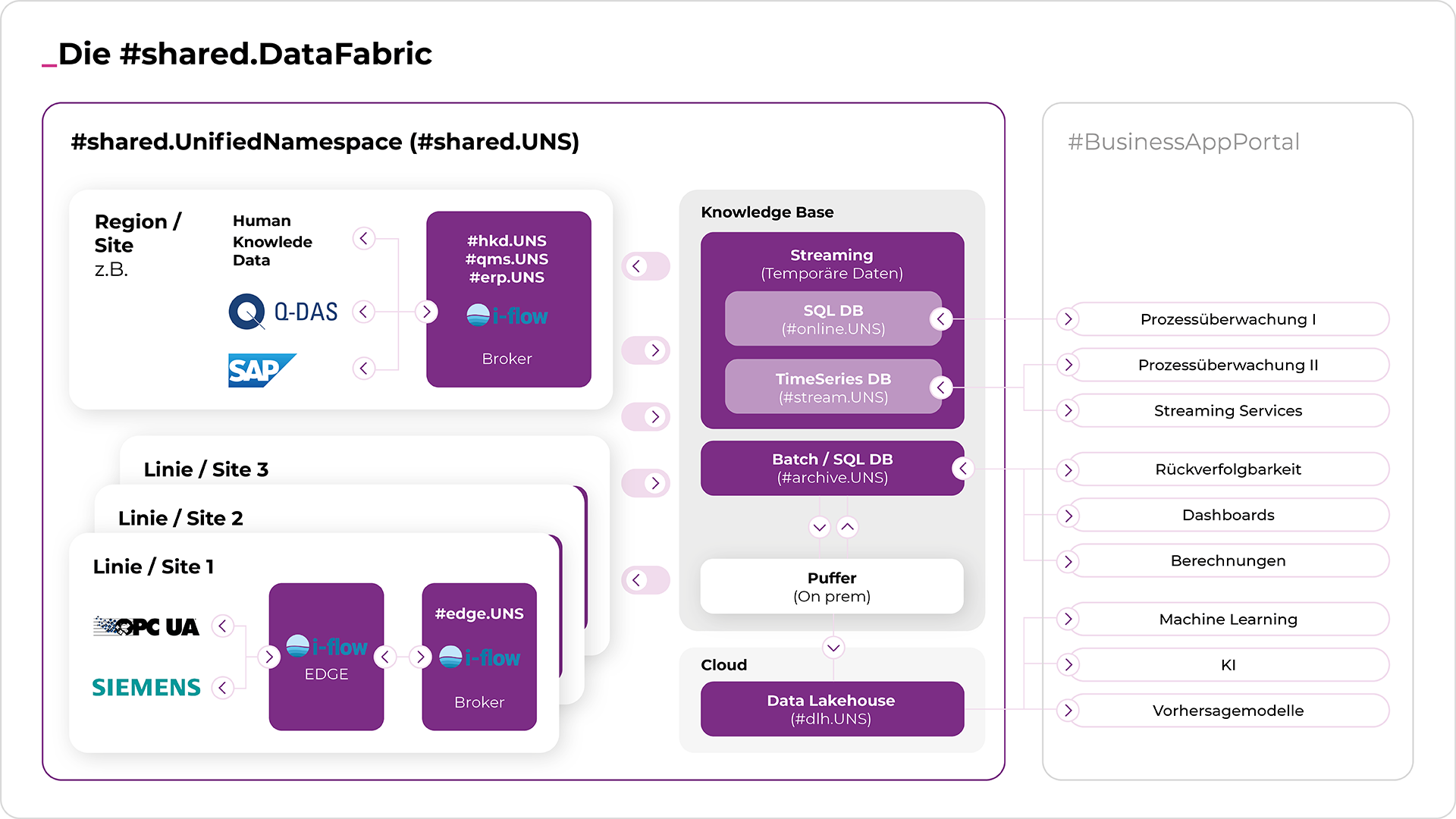

While the UNS is commonly viewed as a central broker, the distributed Unified Namespace architecture follows a decentralized model. In practice, this means multiple specialized UNS instances, known as #edge.UNS, exist at the edge, each tailored to different systems and production environments. Each #edge.UNS represents the structure of a cell, line or plant using a standardized data model, independent of specific use case requirements. While the structure of the #edge.UNS remains consistent across system types, the transmitted channels (descriptions) may vary. This approach enhances scalability and reusability.
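A standardized #edge.UNS structure can be pictured as a fixed topic prefix per cell, with only the channel part varying by system type. The level names below (enterprise, site, area, line, cell) are an ISA-95-inspired assumption, not a model prescribed here, and `EdgeNode` is a hypothetical helper.

```python
import re
from dataclasses import dataclass

# Allowed characters for one topic level (assumption); excludes the MQTT wildcards '+' and '#'
_LEVEL = re.compile(r"[A-Za-z0-9_-]+")


@dataclass(frozen=True)
class EdgeNode:
    """Position of one cell in a hypothetical ISA-95-style hierarchy."""
    enterprise: str
    site: str
    area: str
    line: str
    cell: str

    def topic(self, channel: str) -> str:
        """Build a channel topic: the hierarchy prefix is fixed, the channel part varies."""
        levels = [self.enterprise, self.site, self.area, self.line, self.cell, *channel.split("/")]
        for level in levels:
            if not _LEVEL.fullmatch(level):
                raise ValueError(f"invalid topic level: {level!r}")
        return "/".join(levels)


press = EdgeNode("acme", "munich", "body-shop", "line2", "press01")
welder = EdgeNode("acme", "munich", "body-shop", "line2", "weld03")
print(press.topic("process/force"))     # acme/munich/body-shop/line2/press01/process/force
print(welder.topic("process/current"))  # acme/munich/body-shop/line2/weld03/process/current
```

Because every cell uses the same prefix structure, the same model can be reused for each new cell; only the channel list differs between a press and a welder.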

A higher-level system or broker can aggregate these local namespaces when necessary, depending on the use case. Because the individual requirements and dynamics of use cases are not predefined in the #edge.UNS namespace, the complexity of creating and managing the UNS is significantly reduced. This applies the principle of late variant creation (deferring variant-specific steps as long as possible), which has proven itself in production by increasing efficiency and flexibility, to the data infrastructure, keeping it agile and scalable.
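On-demand aggregation can be pictured as building a use-case-specific view over several unchanged local namespaces. In this sketch, each edge namespace is assumed to be a simple `{channel: latest_value}` mapping under a topic prefix, and the use case selects channels only at aggregation time, in the spirit of late variant creation. The function name and data are hypothetical.

```python
from typing import Dict, Iterable


def aggregate(edge_namespaces: Dict[str, Dict[str, float]], channels: Iterable[str]) -> Dict[str, float]:
    """Build a use-case-specific view over several local namespaces.

    edge_namespaces maps an edge topic prefix to that edge's latest values keyed by
    channel. Only the channels requested by the use case are pulled up; the edge
    models themselves stay unchanged.
    """
    wanted = set(channels)
    view: Dict[str, float] = {}
    for prefix, values in edge_namespaces.items():
        for channel, value in values.items():
            if channel in wanted:
                view[f"{prefix}/{channel}"] = value
    return view


edges = {
    "acme/munich/body-shop/line2/press01": {"process/force": 812.0, "status/state": 1.0},
    "acme/munich/body-shop/line2/weld03": {"process/current": 9.7, "status/state": 1.0},
}
# A line-status use case only needs the machine states
print(aggregate(edges, ["status/state"]))
```

A different use case, such as quality prediction, would request other channels from the same edges without any change to the #edge.UNS models.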

Splitting into Streaming, Batch and Big Data

In addition to the machines and systems in the #edge.UNS, enterprise systems (e.g. ERP, QMS) and employees can serve as further UNS data sources. These are structured in namespaces such as #erp.UNS, #qms.UNS or #hkd.UNS. Human knowledge data in the #hkd.UNS namespace is one of the most important sources for integrating additional knowledge, namely the personal knowledge and experience of people in production. Human knowledge helps to structure and interpret data: only experts can define processes that, for example, link quality data (#qms.UNS) with system data (#edge.UNS) and ERP data (#erp.UNS) to reveal weak points in production processes.
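The expert-defined linkage described above comes down to joining records from different namespaces on a shared key. This sketch assumes the production order number is that key and uses made-up data; the threshold in `flag_orders` stands for the kind of rule a production expert would contribute.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical snapshots from three namespaces, keyed by production order
qms = {  # #qms.UNS: inspection results
    "A-100": {"inspected": 200, "defects": 2},
    "A-101": {"inspected": 180, "defects": 9},
    "A-102": {"inspected": 220, "defects": 11},
}
erp = {  # #erp.UNS: order master data
    "A-100": {"material_batch": "M-1"},
    "A-101": {"material_batch": "M-2"},
    "A-102": {"material_batch": "M-2"},
}
edge = {  # #edge.UNS: process data aggregated per order
    "A-100": {"press_force_max": 805.0},
    "A-101": {"press_force_max": 842.0},
    "A-102": {"press_force_max": 839.0},
}


def defect_rate_by_batch() -> Dict[str, float]:
    """Join QMS results with ERP master data and compute the defect rate per material batch."""
    inspected: Dict[str, int] = defaultdict(int)
    defects: Dict[str, int] = defaultdict(int)
    for order, q in qms.items():
        if order not in erp:
            continue  # no ERP record: skip instead of guessing
        batch = erp[order]["material_batch"]
        inspected[batch] += q["inspected"]
        defects[batch] += q["defects"]
    return {b: defects[b] / inspected[b] for b in inspected}


def flag_orders(threshold: float) -> List[Tuple[str, str, float, float]]:
    """List orders above a defect-rate threshold together with ERP and process context."""
    flagged = []
    for order, q in sorted(qms.items()):
        rate = q["defects"] / q["inspected"]
        if rate > threshold and order in erp and order in edge:
            flagged.append((order, erp[order]["material_batch"], edge[order]["press_force_max"], rate))
    return flagged


print(defect_rate_by_batch())  # {'M-1': 0.01, 'M-2': 0.05}
print(flag_orders(0.03))
```

The code only performs the join; deciding that material batch and press force are the relevant dimensions is exactly the expert knowledge the #hkd.UNS is meant to capture.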

From the #edge.UNS, i-flow splits the data into relevant data streams. Individual values are automatically optimized according to a predefined method for specific services and then published in the respective data model:

- #batch.UNS – for structured, relational data (e.g. BDE data for calculating the OEE).

- #dlh.UNS – for big data applications, in particular machine learning and predictive analyses (e.g. process data for predicting component quality).

- #stream.UNS – for real-time data streams that are used for immediate reactions and monitoring applications (e.g. sensor data for system monitoring).
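The split into the three data services can be sketched as a rule table that maps each #edge.UNS channel to the services that need it. This is not i-flow's configuration format; `ROUTES`, `route` and the channel names are hypothetical. The service names are written without the leading `#`, because `#` is a wildcard in MQTT and not allowed in topic names.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical routing rules: which data service needs which channel
ROUTES: Dict[str, Set[str]] = {
    "status/state": {"batch.UNS", "stream.UNS"},  # OEE calculation and live monitoring
    "counters/good_parts": {"batch.UNS"},         # BDE data for OEE
    "process/force": {"dlh.UNS", "stream.UNS"},   # quality prediction and monitoring
    "process/force_curve": {"dlh.UNS"},           # high-volume raw data for ML only
}


def route(edge_topic: str, channel: str, payload: dict) -> List[Tuple[str, dict]]:
    """Return (target_topic, payload) pairs for every service that uses this channel."""
    services = sorted(ROUTES.get(channel, set()))
    return [(f"{service}/{edge_topic}/{channel}", payload) for service in services]


for target, payload in route("acme/munich/body-shop/line2/press01", "process/force", {"value": 812.0}):
    print(target, payload)
```

Channels without a rule are simply not forwarded, which keeps each service's namespace limited to the data its use case actually needs.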

This makes it possible to feed exactly the relevant data into each use case. Each service can be optimized for its own requirements in terms of criticality, latency, redundancy, etc. For example, optimizing the amount and format of data can significantly reduce latency for specific services. This matters especially for production-critical use cases such as traceability: a system may not be able to continue production until the relevant process and quality data has been written to a database.
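The effect of reducing the amount and format of data per service can be sketched as a field selection plus compact serialization. The message fields and per-service field lists are hypothetical, and payload size is only one factor in end-to-end latency, alongside network, broker and database write times.

```python
import json

# Hypothetical full message as it might arrive from the #edge.UNS
full_message = {
    "timestamp": "2024-05-01T06:00:00+00:00",
    "asset": "acme/munich/body-shop/line2/press01",
    "channel": "process/force",
    "value": 812.0,
    "unit": "kN",
    "quality": "good",
    "description": "Maximum press force of the current stroke",
    "metadata": {"sampling_ms": 100, "firmware": "1.4.2"},
}

# Hypothetical field selection per data service
SERVICE_FIELDS = {
    "stream.UNS": ["timestamp", "value"],                                      # monitoring: minimal payload
    "dlh.UNS": ["timestamp", "asset", "channel", "value", "unit", "quality"],  # ML: more context
}


def optimize(message: dict, service: str) -> bytes:
    """Keep only the fields a service needs and serialize without whitespace."""
    reduced = {k: message[k] for k in SERVICE_FIELDS[service] if k in message}
    return json.dumps(reduced, separators=(",", ":")).encode("utf-8")


full_size = len(json.dumps(full_message).encode("utf-8"))
for service in SERVICE_FIELDS:
    print(f"{service}: {full_size} -> {len(optimize(full_message, service))} bytes")
```

A binary encoding would shrink payloads further; JSON is used here only because it needs no extra dependencies.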

Business Applications in the SharedUNS

Corresponding applications and dashboards analyze and visualize the data from the three data services. Dashboards and real-time analyses are an essential part of the unified namespace architecture and ultimately aim to increase production efficiency. Visualizing the data reveals improvement potential and supports well-founded decisions.

Implementation of a Distributed Unified Namespace Architecture

The implementation of the distributed unified namespace architecture (SharedUNS) takes place in several steps:

- Identification of the use case: The first step is to identify use cases for the SharedUNS.

- Economic analysis of the use cases: An economic analysis of the identified use cases is necessary in order to evaluate the benefits and costs of implementation.

- Integration of machines and systems: Once the use cases have been identified and the economic evaluation has been completed, companies connect the first machines and enterprise systems to the UNS. i-flow helps to facilitate integration and ensure consistent data transfer.

- Definition of the data structure for the #edge.UNS: An important step is to define a standardized data structure according to a process model. i-flow can then be used to model the machine data according to the defined model.

- Development of the first data services: This includes the development and implementation of data services that process, store and analyze the collected data.

- Real-time data analysis and visualization: Powerful dashboards and analysis tools make it possible to visualize and analyze the collected data in real time.
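For the data-structure step, checking incoming messages against the defined model can be sketched as follows. `EDGE_MODEL` and its three fields are assumptions, not a model prescribed by this whitepaper or by i-flow; a production setup would more likely use a schema language such as JSON Schema.

```python
from typing import Dict, List

# Hypothetical minimal data model for one #edge.UNS channel type
EDGE_MODEL: Dict[str, type] = {
    "timestamp": str,
    "value": float,
    "unit": str,
}


def validate(message: dict, model: Dict[str, type] = EDGE_MODEL) -> List[str]:
    """Return a list of violations; an empty list means the message fits the model."""
    errors = []
    for name, expected in model.items():
        if name not in message:
            errors.append(f"missing field: {name}")
        elif not isinstance(message[name], expected):
            errors.append(f"{name}: expected {expected.__name__}, got {type(message[name]).__name__}")
    for name in message:
        if name not in model:
            errors.append(f"unexpected field: {name}")
    return errors


print(validate({"timestamp": "2024-05-01T06:00:00+00:00", "value": 71.5, "unit": "degC"}))
print(validate({"timestamp": "2024-05-01T06:00:00+00:00", "value": "71.5"}))
```

Note that this strict check rejects an integer such as `71` for a `float` field; whether to accept it is a design decision for the process model.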

Conclusion

The digital transformation of industrial production demands a flexible and future-proof data architecture. The Industrial Unified Namespace (UNS) has become a central solution for connecting, integrating, and harmonizing production data. Building on this foundation, the Distributed Unified Namespace (UNS) Architecture (SharedUNS) provides companies with an efficient and scalable approach to managing data and optimizing processes. By separating data streams into streaming, batch, and big data, SharedUNS reduces structural complexity while addressing specific processing requirements. The result is a modular and powerful data strategy that integrates seamlessly into Industry 4.0 concepts. With structured implementation through i-flow and TT PSC, companies can ensure effective and reliable deployment.

Why i-flow and TT PSC?

- Experience and expertise: With 30 years of experience in the automotive industry, including working with various OEMs, we have been developing standard solutions for manufacturing for a long time.

- Long-standing basic principles: Our methods and principles have been proven and established for decades. SharedUNS is based on this experience and the constant further development of systems and technologies.

- Enterprise environments: We specialize in complex enterprise environments and know the specific challenges.

- Familiarity with data models: We know relevant data models, have derived customer-specific models from them and can apply them effectively.

- Systematic approach and toolbox: Our systematic approach and our tried-and-tested software toolbox guarantee efficient solutions.

- No vendor lock-in: Our solutions are independent of large legacy software solutions and of existing software providers, and they build on existing systems using microservices. We bring a proven system and a structured process model to the table.

- Data expertise: Our experts for data types and Industrial DataOps optimize data streams in collaboration with production experts. At the same time, we come from the shop floor and understand your challenges, processes and people.

- Basis and collaboration: We provide the basic infrastructure, while your team works with us to make the specific adjustments required for implementation in your environment.

- Scalability and load tests: We offer scalable solutions and routinely carry out comprehensive load tests.

- Demo environment: Our demo environment can integrate and test your data to demonstrate the functionality.

TT PSC is an international system integrator with headquarters in Munich. By combining our technological knowledge in the areas of cloud, AI and streaming with our production and data expertise, we offer optimized solutions for manufacturing and production processes. We have more than 30 years of experience in the field of the digital factory. Our customers include major German car manufacturers and well-known companies in the supplier industry.